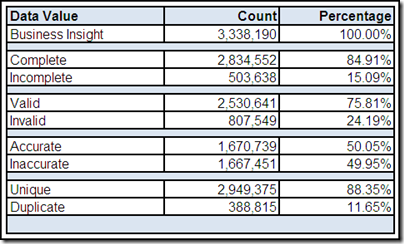

Ensuring that complete and accurate data is being used to make critical daily business decisions is perhaps the primary reason why data quality is so vitally important to the success of your organization.

However, this effort can sometimes take on a life of its own, where achieving complete and accurate data is allowed to become the raison d'être of your data management strategy—in other words, you start managing data for the sake of managing data.

When this phantom menace clouds your judgment, your data might be complete and accurate—but useless to your business.

Completeness and Accuracy

How much data is necessary to make an effective business decision? Having complete (i.e., all available) data seems obviously preferable to incomplete data. However, with data volumes always burgeoning, the unavoidable fact is that sometimes having more data only adds confusion instead of clarity, thereby becoming a distraction instead of helping you make a better decision.

Returning to my original question, how much data is really necessary to make an effective business decision?

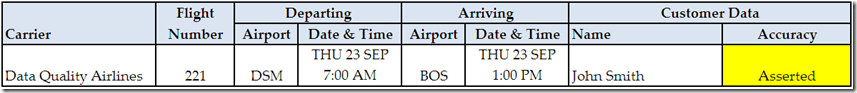

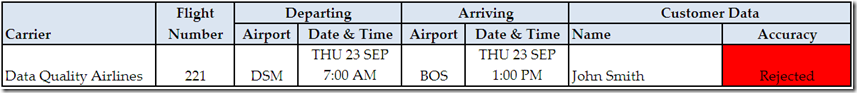

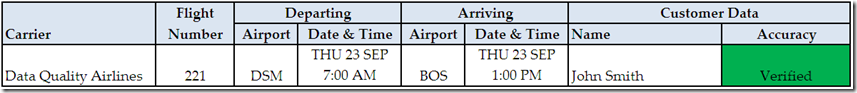

Accuracy, thanks to substantial assistance from my readers, was defined in a previous post as both the correctness of a data value within a limited context such as verification by an authoritative reference (i.e., validity) and the correctness of a valid data value within an extensive context including other data as well as business processes (i.e., accuracy).

Although accurate data is obviously preferable to inaccurate data, less than perfect data quality cannot be used as an excuse to delay making a critical business decision. When it comes to the quality of the data being used to make these business decisions, you can’t always get the data you want, but if you try sometimes, you just might find, you get the business insight you need.

Data-driven Solutions for Business Problems

Obviously, there are even more dimensions of data quality beyond completeness and accuracy.

However, although data quality is about more than just improving your data, it can be misperceived as an activity performed just for the sake of the data. In fact, data quality is an enterprise-wide initiative performed for the sake of implementing data-driven solutions for business problems, enabling better business decisions, and delivering optimal business performance.

To accomplish these objectives, data has to be not only complete and accurate (along with whatever other dimensions you wish to add to your complete and accurate definition of data quality) but, most important, useful to the business.

Perhaps the most common definition for data quality is “fitness for the purpose of use.”

The missing word, which makes this definition both incomplete and inaccurate, puns intended, is “business.” In other words, data quality is “fitness for the purpose of business use.” How complete and how accurate (and however else) the data needs to be is determined by its business use—or uses since, in the vast majority of cases, data has multiple business uses.
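To make that concrete, here is a minimal sketch in Python, assuming completeness and accuracy have already been measured as simple ratios. The `BusinessUse` class, the `fit_for_use` function, the two example uses, and every threshold below are hypothetical names and numbers chosen for illustration, not measurements from a real system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BusinessUse:
    """A hypothetical business use and the quality it requires."""
    name: str
    min_completeness: float  # required share of populated values, 0.0 to 1.0
    min_accuracy: float      # required share of correct values, 0.0 to 1.0


def fit_for_use(completeness: float, accuracy: float, use: BusinessUse) -> bool:
    """Return True when the measured quality meets this use's thresholds."""
    return completeness >= use.min_completeness and accuracy >= use.min_accuracy


# Illustrative measurements for a single customer-address data set.
measured_completeness = 0.92
measured_accuracy = 0.97

# The same data, two business uses with different requirements.
uses = [
    BusinessUse("regional sales trend report", 0.80, 0.90),
    BusinessUse("invoice mailing", 0.99, 0.99),
]

fitness = {
    use.name: fit_for_use(measured_completeness, measured_accuracy, use)
    for use in uses
}
```

With these assumed numbers, the same data set is fit for the trend report but not for the invoice mailing, which is the point: the data did not change, only the business use did.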

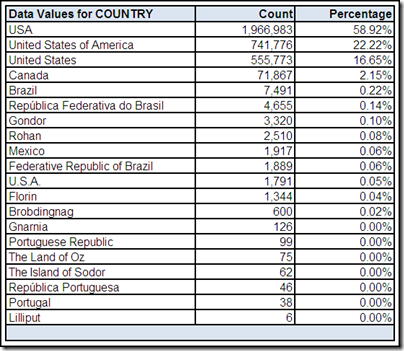

Data, data everywhere

With silos replicating data and new data being created daily, managing all of the data is becoming impractical. Worse, because we are too busy trying to manage all of it, no one is stopping to evaluate its usage or business relevance.

The fifth of the Five New Ideas From 2010 MIT Information Quality Industry Symposium, a recent blog post by Mark Goloboy, was that “60-90% of operational data is valueless.”

“I won’t say worthless,” Goloboy clarified, “since there is some operational necessity to the transactional systems that created it, but valueless from an analytic perspective. Data only has value, and is only worth passing through to the Data Warehouse if it can be directly used for analysis and reporting. No news on that front, but it’s been more of the focus since the proliferation of data has started an increasing trend in storage spend.”

In his recent blog post Are You Afraid to Say Goodbye to Your Data?, Dylan Jones discussed the critical importance of designing an archive strategy for data, as opposed to the default position many organizations take, where burgeoning data volumes are allowed to proliferate because, in large part, no one wants to delete (or, at the very least, archive) any of the existing data.

This often results in the data that the organization truly needs for continued success getting stuck in the long line of data waiting to be managed, and in many cases, behind data for which the organization no longer has any business use (and perhaps never even had the chance to use when the data was actually needed to make critical business decisions).

“When identifying data in scope for a migration,” Dylan advised, “I typically start from the premise that ALL data is out of scope unless someone can justify its existence. This forces the emphasis back on the business to justify their use of the data.”
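The "out of scope unless justified" premise can be sketched as a default-deny rule. This is only an illustration of the idea, not Dylan's actual method: the `migration_scope` function, the data set names, and the justifications are all hypothetical.

```python
def migration_scope(datasets, justifications):
    """Return the data sets that are in scope for migration.

    Every data set starts out of scope; it is included only when the
    business has recorded a non-empty justification for it.
    """
    return [name for name in datasets if justifications.get(name, "").strip()]


# Hypothetical inventory of candidate data sets.
datasets = ["orders_2009", "legacy_fax_numbers", "customer_master"]

# Hypothetical justifications gathered from business owners.
justifications = {
    "orders_2009": "needed for year-over-year revenue reporting",
    "customer_master": "feeds billing and support",
    "legacy_fax_numbers": "",  # no one could say why it is still needed
}

in_scope = migration_scope(datasets, justifications)
```

Here `legacy_fax_numbers` stays out of scope simply because no one justified it, so the burden of proof sits with the business rather than with the data.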

Data Memorioso

Funes el memorioso is a short story by Jorge Luis Borges, which describes a young man named Ireneo Funes who, as a result of a horseback riding accident, has lost his ability to forget. Although Funes has a tremendous memory, he is so lost in the details of everything he knows that he is unable to convert the information into knowledge and unable, as a result, to grow in wisdom.

In Spanish, the word memorioso means “having a vast memory.” When Data Memorioso is your data management strategy, your organization becomes so lost in all of the data it manages that it is unable to convert data into business insight and unable, as a result, to survive and thrive in today’s highly competitive and rapidly evolving marketplace.

In their great book Made to Stick: Why Some Ideas Survive and Others Die, Chip Heath and Dan Heath explained that “an accurate but useless idea is still useless. If a message can’t be used to make predictions or decisions, it is without value, no matter how accurate or comprehensive it is.” I believe that this is also true for your data and your organization’s business uses for it.

Is your data complete and accurate, but useless to your business?
